What You’ll Find in This Article…

The Hidden Price of AI Adoption That Nobody Puts on the Invoice — and the Complete Framework for Protecting What You Have Built

There is a conversation that happens in every AI vendor’s sales presentation that never makes it to the slide. The conversation about what happens to your data — your customers’ trust made tangible — once it enters the systems you are integrating into your business. This article has that conversation. Completely. Without the comfortable omissions. Here is what is inside:

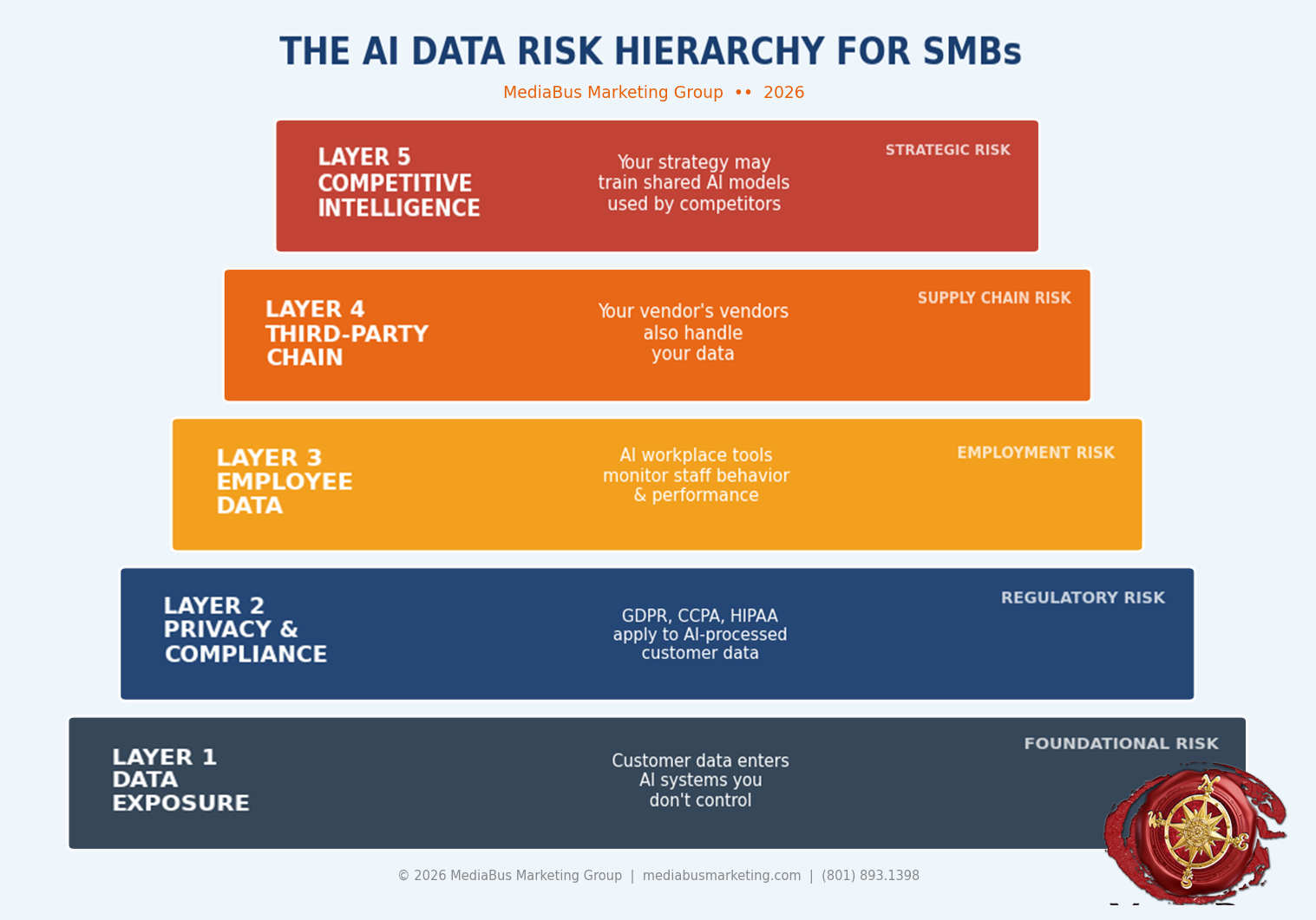

- The AI Data Risk Hierarchy — the five-layer risk model every small business must understand

- Where your customer data actually goes — the hidden flow most businesses never trace

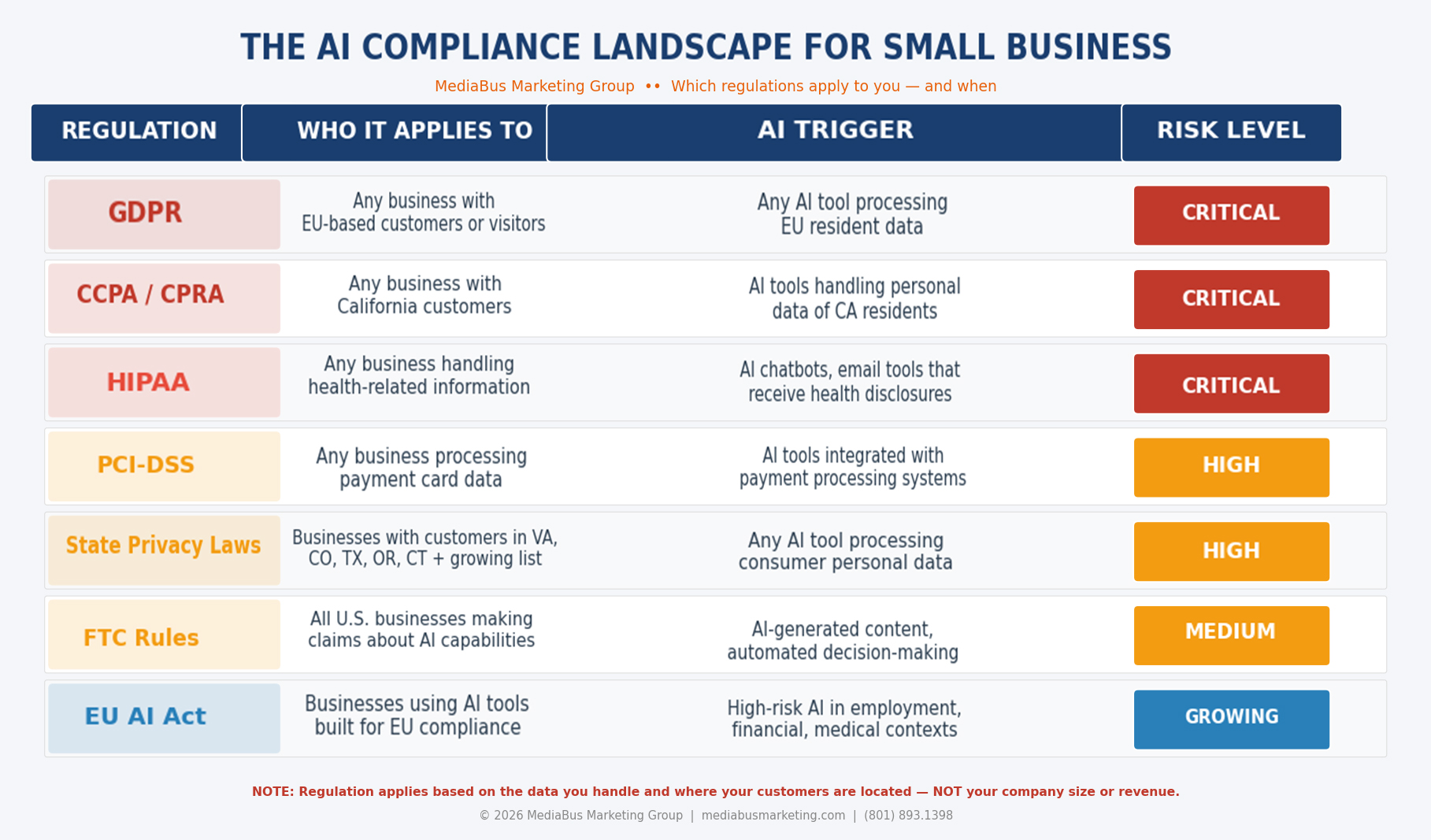

- Why “small business” is no longer a shield from privacy law — the regulatory reality that has changed completely

- The seven dimensions of AI data risk — each one specific, each one solvable

- The compliance landscape — which regulations apply to you right now and exactly why

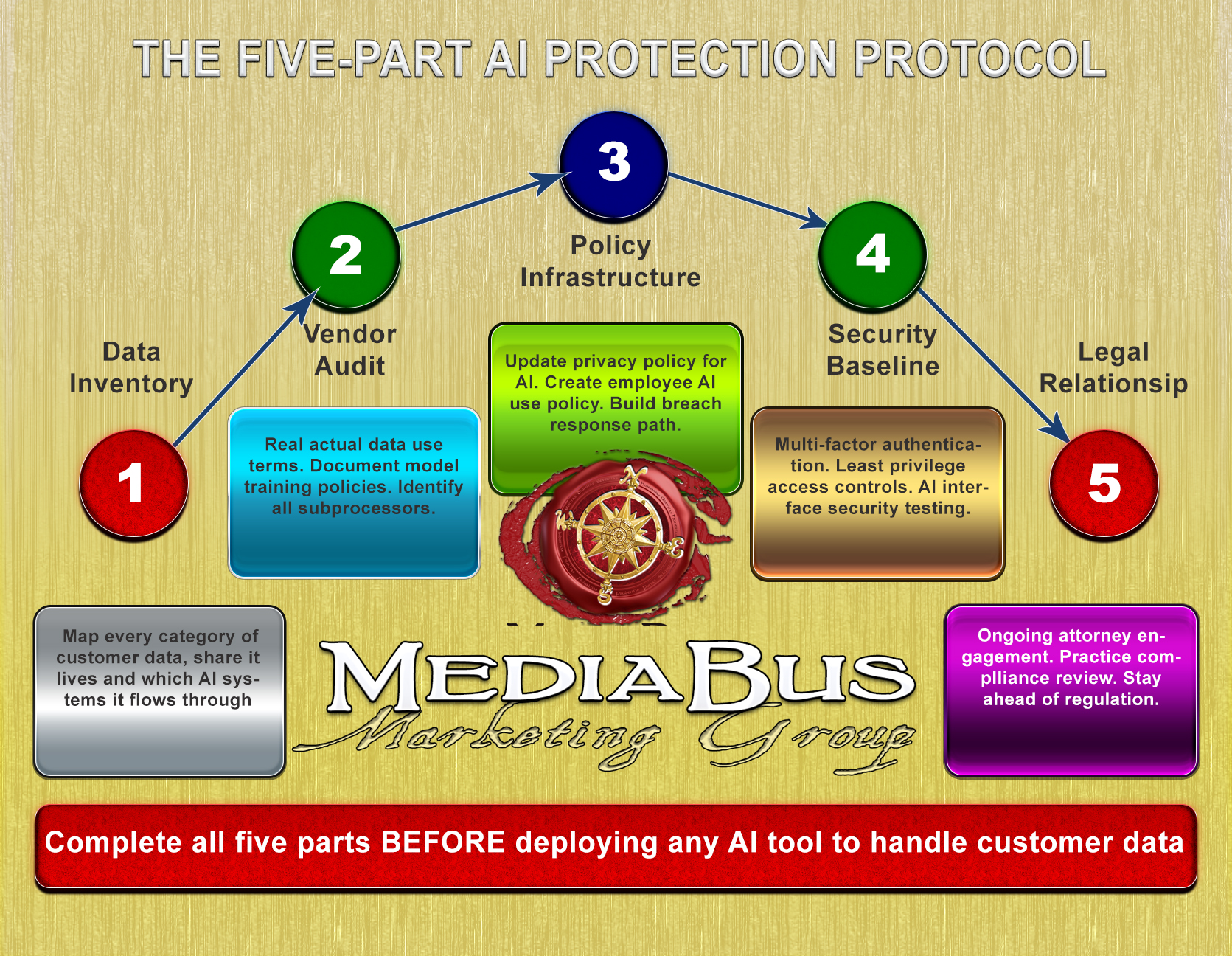

- The Five-Part Protection Protocol — the complete framework for deploying AI with integrity

THE CONVERSATION THAT CHANGES EVERYTHING

Picture in your mind’s eye a standard, hum-drum filing cabinet.

Not a metaphor. A real, steel filing cabinet — the kind that stood in the back office of every small business for the better part of a century. Inside that cabinet was everything. Customer names. Addresses. Purchase histories. Employee records. The accumulated intelligence of a business is built over years of relationship and trust.

That cabinet had a lock. You had the key. You knew who opened it. You knew what was left with them when they walked out.

Now, picture what happens the moment you integrate your first AI tool.

The cabinet does not disappear. But it gains a door you did not install — one that opens into a network of servers you do not own, processing systems you did not design, and data use agreements you may not have read carefully. The information that once sat behind a lock you controlled is now flowing through infrastructure built and operated by people you have never met.

This is not a reason to reject AI. It is a reason to understand it — precisely, specifically, and with the same care you brought to every other consequential decision your business has ever made.

Both things are true simultaneously. AI is the most powerful business development capability in the history of small business. And the data, security, privacy, and compliance dimensions of AI adoption are the most underestimated risks in that same history.

Hold both truths. Act on both. And you will build something that no competitor armed only with technology can touch.

THE SEVEN DIMENSIONS OF AI DATA RISK

DIMENSION 3 — THE PRIVACY OBLIGATIONS YOU CARRY AND MAY NOT KNOW ABOUT

There is a fiction that small businesses carry about privacy law: that it applies to large corporations with legal departments, not to businesses like theirs.

That fiction is not just inaccurate. In the age of AI, it is expensive.

GDPR: If your business collects data from individuals in the European Union, and in the digital economy, this happens the moment a European visitor fills out a form on your website, GDPR obligations apply to your business regardless of where your business is located or how small it is. With the way the E.U. is going after these regulations (Remember what they are doing with X and Facebook?), it would be wise to follow it if you do business abroad.

CCPA/CPRA: California residents have specific, enforceable rights over their personal data. If you have California customers, and the internet makes geographic containment essentially impossible, these obligations apply to you. Virginia, Colorado, Connecticut, Texas, Oregon, and a growing list of states have passed substantially similar legislation.

Industry-Specific Regulations: HIPAA governs any business handling health information. PCI-DSS governs any business processing payment card data through AI-integrated systems. These sector-specific regulations do not have a size threshold. They apply based on what you handle, not how large you are. These hit closer to home, and with anything that deals with HIPAA and, to a certain point, PCI compliance, if you are in breach, your company can suffer heavily with the penalties they can levy.

The little-known dimension most SMBs miss entirely: The arrival of AI has created a new category of privacy obligation: the obligation around automated decision-making. When your AI lead scoring system decides which prospects receive follow-up and which do not, several privacy frameworks — GDPR most explicitly — require transparency and, in some cases, human override capability. As AI becomes more deeply integrated, this dimension will become more prominent and more regulated.

DIMENSION 4 — THE COMPLIANCE LANDSCAPE MOVING FASTER THAN YOU THINK

Here is the reframe that changes everything about how you approach compliance:

Compliance is not your enemy. Compliance is the formalized expression of what your customers already expect from you. That you will handle their information responsibly, that you will use it only for purposes they reasonably anticipate, that you will protect it with appropriate care, and that you will be honest about what you are doing. On the other end of that dial, if you do not, it is easier than ever to breach computer systems that leave themselves unprotected.

If you do those four things, you are not just compliant. You are trustworthy. And in a marketplace where AI adoption is generating genuine consumer anxiety about data practices, trustworthiness is a competitive weapon. And if you don’t, you are in a world of hurt when the digital crap hits the fan.

What is actually happening right now:

The Federal Trade Commission has dramatically expanded its focus on AI-related deception and data practices. State privacy laws are proliferating at a pace that has made geographic compliance avoidance effectively impossible. The EU AI Act has established the world’s first comprehensive AI regulatory framework — already influencing how AI tools are built globally.

The hidden compliance trap that catches SMBs most frequently: The gap between what your privacy policy says and what your AI-integrated business actually does. A privacy policy written before AI tools were integrated does not accurately describe your current data practices — and operating under a legally binding description of your data practices that is factually inaccurate is precisely what regulators look for, and plaintiff attorneys exploit.

Updating your privacy policy to accurately reflect your current AI-integrated reality is not administrative housekeeping. It is legal protection.

DIMENSION 5 — THE EMPLOYEE DATA DIMENSION NOBODY DISCUSSES

Every conversation businesses are having about AI data and privacy focuses almost exclusively on customer data. This is dangerously incomplete.

When you don’t have your company’s protocols in place to not only protect your company, but also your company’s Intellectual Properties, then the “Brainstorming” with the free version of ChatGPT or Gemini can definitely become a liability. And that is the least of your concerns with how you and your employees use AI.

See, your employees are generating data through AI-integrated workplace tools at a scale and intimacy that has no historical precedent. AI productivity tools monitor work patterns. AI communication platforms analyze message content. AI project management systems track task completion and response times with a granularity that would have required a team of observers to produce manually a decade ago.

And if you do not have use limitations (who and what you include in the AI’s Applications) or data guidelines (non-siloed LLMs getting proprietary info) or even an established master prompt so all that is being done in your name is uniformed and working on the same plain as you need it to be then you aren’t ready for the AWESOMENESS of what AI can do for your company.

The dimension arriving faster than most businesses are tracking:

Employment law around AI monitoring is one of the fastest-evolving areas of labor regulation in the country. Several states have passed or are actively considering legislation requiring employers to disclose when AI tools monitor employee performance or influence employment decisions. The EU AI Act explicitly classifies AI in employment contexts as high-risk.

The Overcome: Audit the AI tools in your business for their employee data collection practices with the same rigor you apply to customer data. Establish a written AI use policy that informs employees about the tools in use, the data those tools collect, and how that data influences decisions that affect them.

DIMENSION 6 — THE THIRD-PARTY RISK CHAIN

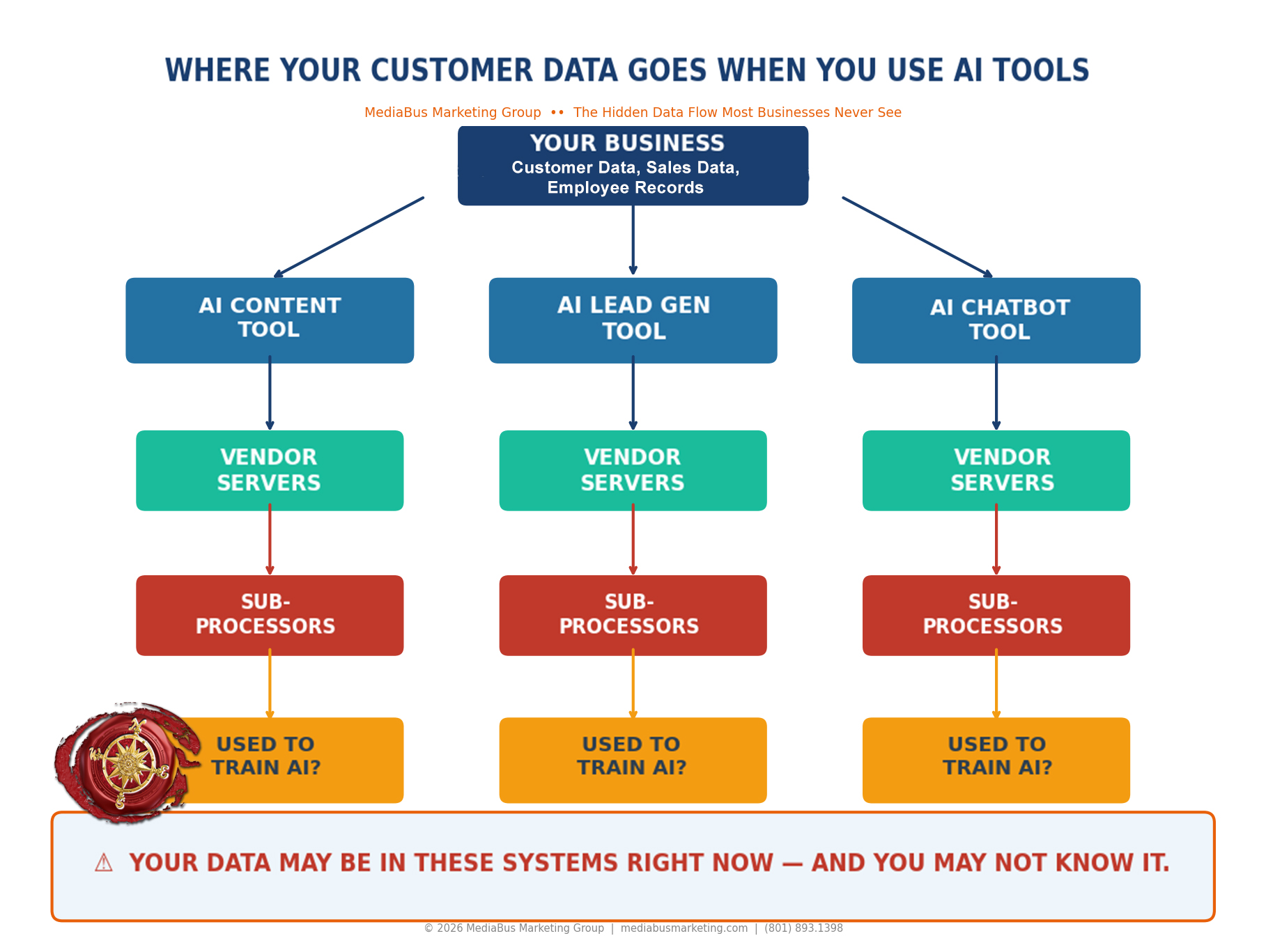

When you integrate an AI tool, you are not just accepting the data practices of that tool’s direct provider. You are accepting the data practices of every vendor, every subprocessor, and every infrastructure partner in that tool’s entire supply chain — whether you know who they are or not.

The fundamental principle of stewardship: When you accept something into your care, you are responsible for its fate regardless of whose hands it subsequently passes through. Your customers consigned their data to you — not to your AI vendor’s cloud infrastructure provider. The obligation that stewardship creates does not diminish with the complexity of the chain it travels through.

The Overcome: Request from every significant AI vendor a copy of their subprocessor list. Require vendor contracts that include data processing agreements addressing subprocessor obligations explicitly, breach notification requirements with specific timelines, and your right to audit data practices.

DIMENSION 7 — THE COMPETITIVE INTELLIGENCE EXPOSURE

When you feed your business data into AI tools — your customer profiles, your pricing strategies, your highest-converting content frameworks, your competitive positioning — you are potentially contributing your strategic intelligence to systems that your direct competitors also use.

The mechanism: Many AI platforms improve their models using aggregated data from all users. When you use such a platform to develop your best sales approach, that strategic intelligence may contribute to a shared learning system that your competitors — using the same tool — benefit from.

The Overcome: Establish a clear, documented distinction between generic operational content — which may appropriately flow through shared AI platforms — and proprietary strategic intelligence — which should be processed only through AI systems with contractually guaranteed data segregation.

THE FIVE-PART PROTECTION PROTOCOL

Every risk below is addressable. None requires a legal department. All require decision, discipline, and the recognition that your customers’ trust is the irreplaceable asset it actually is.

The Five-Part AI Protection Protocol: The sequential framework for deploying AI with integrity — Data Inventory, Vendor Audit, Policy Infrastructure, Security Baseline, and Legal Relationship — with what each part requires and why the sequence matters.

The Five-Part AI Protection Protocol: The sequential framework for deploying AI with integrity — Data Inventory, Vendor Audit, Policy Infrastructure, Security Baseline, and Legal Relationship — with what each part requires and why the sequence matters.

Part 1 — The Data Inventory: Before you can protect what you have, you must know what you have and where it travels. Map every category of personal data your business holds — customer, employee, vendor. Document where it is stored, which AI systems it flows through, and what contractual protections govern its use at each point.

Part 2 — The Vendor Audit: For every AI tool currently connected to your operations, retrieve and read the full data use terms. Document whether each vendor uses your data for model training. Document their subprocessor relationships, breach notification procedures, and what happens to your data when you leave. Renegotiate or replace any vendor whose terms do not meet the standard your customers’ trust deserves.

Part 3 — The Policy Infrastructure: Ensure your privacy policy accurately describes your current AI-integrated data practices. Establish an internal AI use policy informing employees about monitoring and data collection. Create a data breach response plan — with named roles, documented timelines, and clear communication protocols — before a breach occurs, not during one.

Part 4 — The Security Baseline: Strong authentication on every AI-connected system. Least-privilege access controls on every AI integration. Security testing of every customer-facing AI interface. An incident monitoring capability detecting anomalous AI system behavior before it becomes a breach.

Part 5 — The Legal Relationship: Establish an ongoing relationship with a business attorney who understands AI, privacy law, and your industry. Not a one-time consultation. Not a document review after something goes wrong. A proactive advisory relationship that keeps your practices current as regulations develop — because the regulations are developing fast, and the cost of staying ahead of them is a fraction of the cost of catching up from behind.

THE BOTTOM LINE

There is a truth that has governed commerce since the first transaction was ever made between two human beings. It is older than every law, every regulation, every compliance framework. It will outlast every AI system ever built.

People do business with people they trust.

Your customers trust you with their names. Their contact information. Their financial data. Sometimes their health. Sometimes the most private details of their lives, oftentimes with much, much more, are shared in the context of a business relationship because you had built, through everything you had ever done in their presence, the belief that you were worthy of it.

AI does not change that trust. It tests it. Every AI tool you deploy. Every agreement you click through. Every decision you make about what data flows where — all of it is a test of whether the infrastructure of your business matches the character of the person who built it.

The businesses that win at AI are not the fastest. They are the most trusted. Trust converts at rates that technology cannot replicate and competitors cannot easily undercut.

DECIDE. RIGHT NOW. That this business of yours will honor the trust of your previous and present customers

You are the keeper of another’s confidence. And cannot betray it for any tool, any efficiency, or any shortcut that the world might offer.

At MediaBus Marketing Group, we help small businesses integrate AI with the strategic foundation, the ethical framework, and the practical protection protocols that make the integration worth celebrating rather than surviving. Because your customers’ trust is the most valuable thing your business owns. And we build AI strategies designed to grow it — not risk it.

Let us walk through your AI data practices together — map what is exposed, identify what needs to change, and build the protection framework that lets you use AI powerfully, responsibly, and with the full integrity your customers have always deserved.

CYBER SECURITY FAQs

FAQ 1 — What happens to my customers’ data when I use AI tools in my small business, and how do I know if it is being used to train AI models?

When you integrate AI tools into your business operations, your customers’ data — contact information, purchase history, communication records, and behavioral patterns — flows into systems operated by the AI vendor and potentially by that vendor’s own third-party subprocessors. What happens to it depends entirely on the data use terms of the specific tool you are using.

The critical question: does the vendor use customer data entered into their platform to train or improve their AI models? Many popular small business AI tools include provisions permitting this use. It is not prominently disclosed. It is typically embedded in the terms of service that most users accept without reading.

To find out: read the full terms of service and data processing agreement for every AI tool you currently use, searching specifically for language about model training, service improvement, data aggregation, and anonymization — because data that has been anonymized by the vendor is still data that originated from your customers. If the language is ambiguous, ask the vendor directly in writing and require a written response. If your customer data is being used to train models accessible to your competitors, either renegotiate the terms or choose a provider whose data practices match the responsibility your customers placed in you.

FAQ 2 — What privacy laws apply to my small business when I use AI, and how do I know if I am compliant?

The privacy laws applicable to your small business are determined not by your revenue, your employee count, or your years in operation — but by the data you collect, the individuals whose data you hold, and the states or countries where those individuals are located.

If you have customers in California, CCPA and CPRA obligations apply to those customers’ personal data regardless of your business’s size. If you collect data from individuals in the European Union — which any business with a publicly accessible website potentially does — GDPR obligations apply to your business. If you operate in healthcare, financial services, or education, HIPAA, PCI-DSS, and FERPA apply based on what data you handle, not how large your company is.

AI integration creates specific new compliance considerations beyond traditional data privacy: the obligation to disclose when automated AI systems are making decisions that affect individuals, and the requirement in some jurisdictions to provide human review capability for significant AI-driven decisions.

To assess your compliance position: conduct a data inventory mapping every category of personal data you collect and every AI system it flows through; have your privacy policy reviewed by a business attorney to confirm it accurately describes your current AI-integrated practices; and establish a process for responding to data rights requests within legally required timeframes.

FAQ 3 — What are the specific cybersecurity risks that AI creates for small businesses, and what is the minimum protection standard I should have in place?

AI creates four cybersecurity risks for small businesses that are meaningfully different from pre-AI risks.

Expanded attack surface: Every AI tool connected to your business systems is an additional access point. The compromise of an AI vendor’s infrastructure can expose every client system that vendor holds permissions in — including yours.

Prompt injection attacks: Malicious inputs crafted specifically to manipulate customer-facing AI interfaces into revealing sensitive information, bypassing restrictions, or taking unauthorized actions — a documented, actively exploited vulnerability.

Data exposure through vendor breach: The personal and business data stored or processed by your AI vendors is subject to the security practices of those vendors and their subprocessors, which you do not directly control.

AI-generated social engineering: Attackers are using AI to produce highly personalized phishing attacks using data about your business gathered from publicly accessible sources — including your own AI-generated content.

The minimum protection standard includes: multi-factor authentication on every system AI tools can access; least-privilege access on every AI integration; security testing of every customer-facing AI interface specifically for prompt injection vulnerabilities; a vendor security review for every AI tool with access to sensitive data; and an incident response plan defining what happens in the first 24 hours of a confirmed breach.

FAQ 4 — Can my competitors access my business strategy or customer insights through the AI tools we both use?

The honest answer is yes — under specific and common conditions that most small business owners have never been told to consider.

Many AI platforms improve their models using aggregated data from all users. When you use such a platform to develop your most effective sales approach, generate your highest-converting content, or refine your competitive positioning — and the platform uses those interactions to improve its shared model — the strategic intelligence embedded in your inputs contributes to a learning system that every other user of the platform benefits from, including your direct competitors.

The consequence is sharpest in industries with small practitioner communities — specialty trades, professional services, regional retail — where the learning from one exceptional performer can meaningfully elevate the performance of that performer’s direct competition.

The practical protection: establish a documented distinction between generic operational content — which may flow through shared AI platforms — and proprietary strategic intelligence — your best sales approaches, pricing logic, highest-converting content frameworks — which should be processed only through AI systems with contractually guaranteed data segregation or through internal AI deployments where no third-party model training occurs.

FAQ 5 — What should I do right now if I have already deployed AI tools without considering these data, security, and privacy dimensions?

Begin where you are. Not where you wish you had started. Begin immediately.

In the first week: Conduct a rapid audit of every AI tool currently connected to your business. For each tool, document what data it has access to, whether you have read its full data use terms, and whether those terms permit use of your data for model training. This audit does not require perfection. It requires honesty.

In the first month: Read the full data use and data processing agreements for every tool identified. For any tool whose terms permit model training on your customer data, contact the vendor to renegotiate or begin evaluating alternatives. Update your privacy policy to accurately reflect your current AI-integrated practices — with legal review. Implement multi-factor authentication on every system your AI tools can access.

In the first quarter: Conduct a full data inventory mapping of every category of personal data your business holds and which AI systems it flows through. Establish a data breach response plan. Build vendor security review into your standard AI onboarding process. And initiate the legal advisory relationship that transforms your compliance posture from reactive to proactive.

The businesses that work through this sequence discover something that consistently surprises them: building responsible AI data practices does not constrain their AI adoption. It is the foundation that makes their AI adoption worthy of the customers who trusted them before a single AI tool was ever turned on. And worthiness — earned and maintained and demonstrated consistently — is the competitive advantage that compounds without limit and that no technology, however powerful, can replicate or replace.